A while back I worked with a guy named Phil. Teams would often suggest bolting on security at a later stage rather than fixing the underlying problem, and he would always clap back with “you can’t bootstrap trust”. That’s what I want to talk about today. Trust has to be end to end: if any link in the chain is weak, the whole thing collapses. You can build on a rocky foundation, but it’s going to reduce the security of the control and lead to gaps in your design that are impossible to plug.

The Endpoint Example

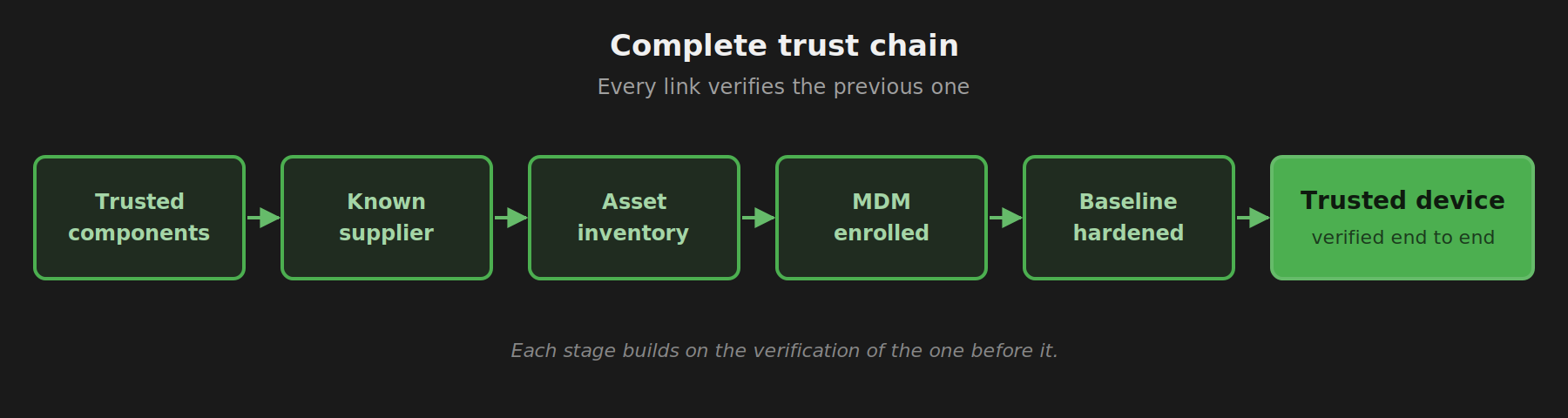

The cleanest example of a trust chain in security is endpoint management. To get a genuinely trusted, managed endpoint you need a few things to happen in order:

- The device’s components are sourced and built by known, trusted manufacturers.

- The device is bought from a known supplier.

- It lands in your asset inventory before it ever touches a user.

- It’s enrolled into MDM, tied to a specific person.

- It’s brought up to your baseline security standards: disk encryption, EDR, OS version, the usual.

Break any link in that chain and the trust falls apart. The device might still look managed, but you can’t actually verify it’s the device you think it is, owned by who you think it’s owned by. For most organisations this isn’t a huge deal day to day, and only places like Apple, Google and Amazon really obsess over the early stages of the supply chain. But there are cases where it matters.
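To make the "any broken link" point concrete, here is a rough sketch of the chain as an ordered check. The link names and the `evidence` dictionary are made up for illustration and don't correspond to any real MDM or inventory schema. The only idea it shows is that trust is the conjunction of every link, and that the earliest unverified link is where the chain actually ends.

```python
from typing import Dict, Optional

# Hypothetical link names mirroring the list above, in order.
# These are illustrative labels, not fields from a real product.
CHAIN = [
    "trusted_manufacturer",
    "known_supplier",
    "in_asset_inventory",
    "mdm_enrolled_to_person",
    "meets_baseline",
]


def first_broken_link(evidence: Dict[str, bool]) -> Optional[str]:
    """Return the earliest link with no verified evidence, or None if the chain holds."""
    for link in CHAIN:
        # Missing evidence counts as unverified, not as a pass.
        if not evidence.get(link, False):
            return link
    return None


def is_trusted(evidence: Dict[str, bool]) -> bool:
    """A device is trusted only if every link in the chain is verified."""
    return first_broken_link(evidence) is None


if __name__ == "__main__":
    corporate = {link: True for link in CHAIN}
    # Enrolled and compliant, but never recorded in the inventory.
    looks_managed = dict(corporate, in_asset_inventory=False)
    print(is_trusted(corporate), first_broken_link(looks_managed))
```

Note that the second device passes the last two checks, which is exactly why it looks managed in a console that only reports on enrollment and compliance.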

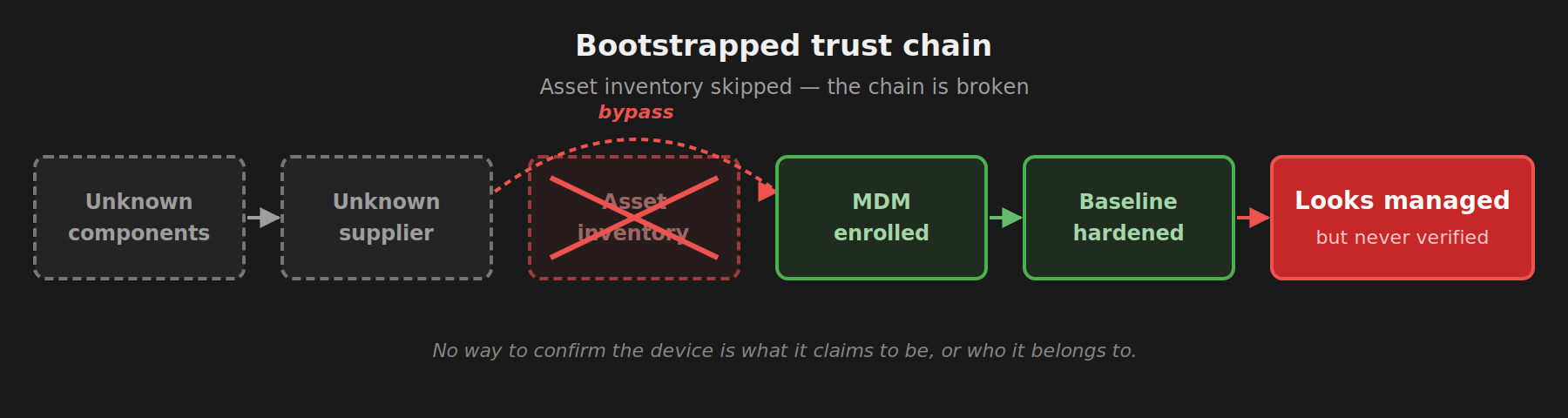

The bootstrapped version of this looks similar on paper, but it isn’t the same thing. Imagine your MDM allows open enrollment with no asset inventory check. A staff member’s personal MacBook can join, get all the policies, and look identical to a corporate device in your console. And it might not even be a MacBook. It could be a VM, someone faking user agent strings, or something else entirely. You’ve got no way to verify. You’ve created trust where none exists. The device hasn’t been verified, the ownership hasn’t been verified, and unless someone physically lays hands on it and confirms what it is, it can never truly become a managed asset. You’ve just got something that looks managed.
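The difference between the two enrollment models can be sketched in a few lines. Everything here is hypothetical: the inventory contents, the serial numbers, and the function names are invented for the example, and real MDM products expose this differently.

```python
# Hypothetical asset inventory: serial number -> assigned owner,
# recorded at purchase time, before the device reaches a user.
ASSET_INVENTORY = {
    "C02XK1ABCD": "alice@example.com",
}


def open_enrollment(serial: str, user: str) -> bool:
    """Open enrollment: anything that asks to join is accepted."""
    return True


def gated_enrollment(serial: str, user: str, inventory=ASSET_INVENTORY) -> bool:
    """Inventory-gated enrollment: the device must already be known
    and assigned to the person enrolling it."""
    owner = inventory.get(serial)
    return owner is not None and owner == user


if __name__ == "__main__":
    # A personal laptop or VM gets in under open enrollment...
    print(open_enrollment("VM-FAKE-0001", "bob@example.com"))
    # ...but not when enrollment is tied back to the inventory.
    print(gated_enrollment("VM-FAKE-0001", "bob@example.com"))
```

Even the gated version assumes the serial number the device reports is genuine. A self-reported serial can be spoofed, so in practice you would want something hardware-backed underneath it, which is the same trust chain argument one level down.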

AI Has The Same Problem

Right now everyone is building layers on top of AI rather than building security into AI. You end up with an ecosystem with no foundational trust, where the burden of verification falls on individuals doing manual checks at runtime.

There are really three ways to get preventative controls in front of AI today:

- Access control, which is limited because OAuth and API keys don’t get granular enough. It’s good and you should do this, but it’s limited in how far it’ll get you.

- Sandboxing, which works but needs dedicated infrastructure and strict rules about what can run, where, and when. Sandboxing is great, but it’s a heavy lift and isn’t suitable for every situation.

- Proxies, which are the easiest entry point. You don’t have to migrate all your agents into one environment first, which is the hard part of sandboxing. People use proxies to add governance, and also to add an identity layer that covers the gaps where access control falls down.

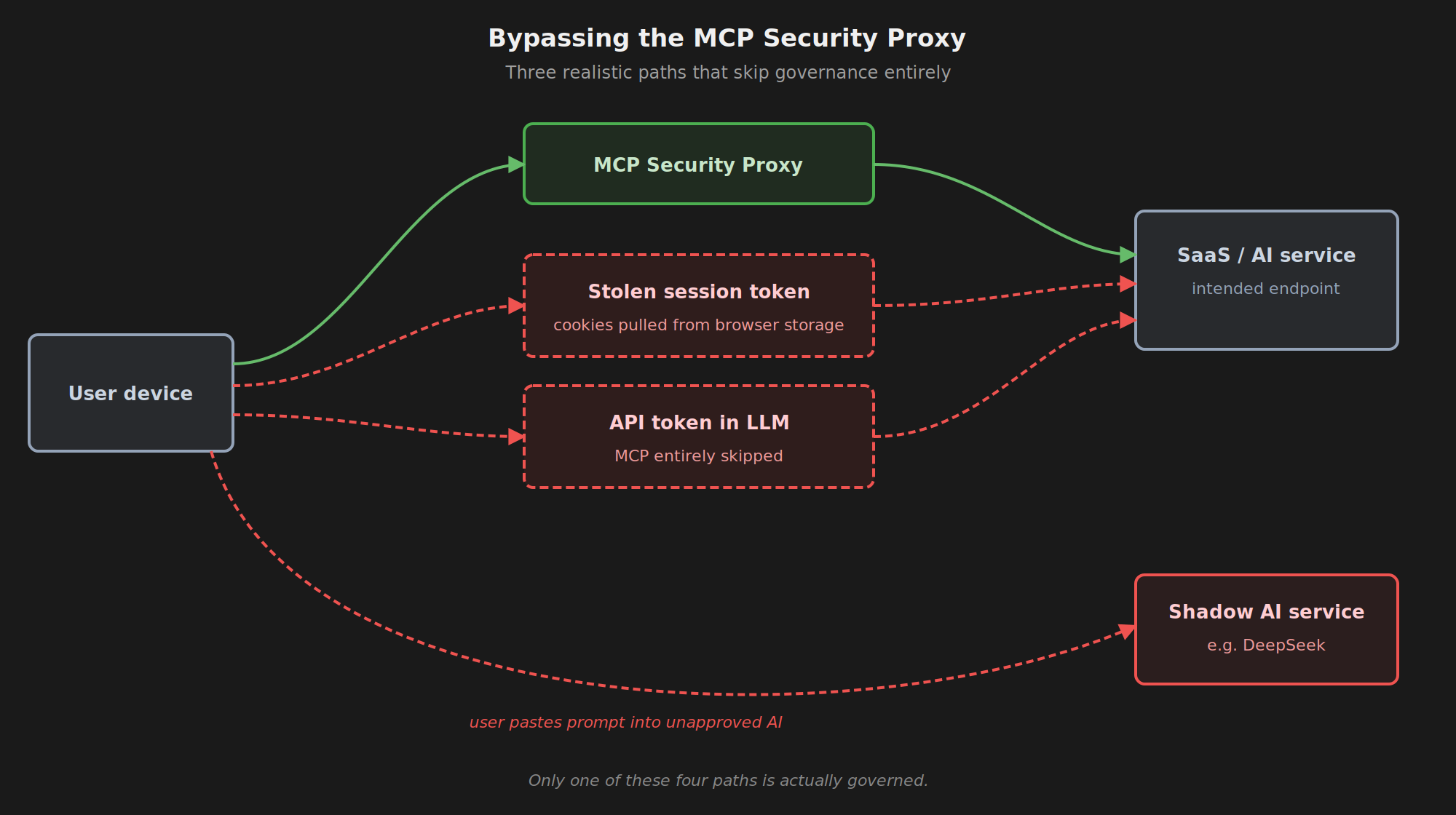

Access control only gets you so far and sandboxing is a heavy lift, so most teams end up at proxies. The catch is a proxy only works if everyone actually uses it, and proxies are easily bypassed unless you have strict controls at every layer underneath. For the proxy itself to mean anything, you need:

- URL filtering, so people can’t just hit the online tool directly in a browser.

- Shadow IT detection, to catch when they do it anyway.

- Application allowlisting, so unapproved AI apps can’t run on the device in the first place.

- API key hygiene, so users can’t grab a key and pipe it straight into whatever AI tool they want, going around the proxy entirely.

Each of those is a separate control that has to hold for the proxy to be meaningful. This is the definition of bootstrapping trust. You’re trying to build a managed AI surface on top of a foundation that wasn’t designed for it.
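One way to picture the dependency is to map each supporting control to the bypass that opens up when it's missing. This is a sketch only: the control names and the bypass descriptions are my shorthand for the list above, not output from any real tool.

```python
from typing import List, Set

# Hypothetical mapping: supporting control -> bypass left open without it.
BYPASS_BY_MISSING_CONTROL = {
    "url_filtering": "use the AI tool directly in a browser",
    "shadow_it_detection": "unsanctioned use goes unnoticed",
    "application_allowlisting": "run an unapproved AI app on the device",
    "api_key_hygiene": "pipe an API key into a tool outside the proxy",
}


def open_bypasses(controls_in_place: Set[str]) -> List[str]:
    """List the ways around the proxy given the controls that actually hold."""
    return [
        bypass
        for control, bypass in BYPASS_BY_MISSING_CONTROL.items()
        if control not in controls_in_place
    ]


def proxy_is_meaningful(controls_in_place: Set[str]) -> bool:
    """The proxy only means something if no bypass is left open."""
    return not open_bypasses(controls_in_place)


if __name__ == "__main__":
    # A fairly typical position: web filtering exists, nothing else does.
    for bypass in open_bypasses({"url_filtering"}):
        print("still possible:", bypass)
```

The proxy never appears in the function at all. Whether it's meaningful is decided entirely by the controls underneath it.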

Below is a crude diagram of just a few ways this is possible if you don’t have the other controls in place, with a couple of MCP and LLM based examples.

But It Catches 80% Of People

If you work in security, you might say “look, most people won’t bother working around the controls. Even a leaky proxy still protects 80% of staff, and that’s fine.”

The problem is that people gravitate to whatever is easy. Unless your proxy is genuinely easier than the unmanaged alternative, you’re going to get complaints, productivity will take a hit, and your most senior or most technical staff will be the first to find ways around it. The maintenance burden on your team also grows. You’re now managing more proxies, more network infrastructure, and more edge cases just to hold the existing controls together. The 80% you’re protecting often doesn’t include the 20% that actually matter: the senior and technical people the rest of the company learns from.

A lot of tools in this space are trying to bootstrap trust into AI workflows, and that’s normal in a new, fast-moving area. We’ve been here before. VPNs before SaaS matured. Thin clients in enterprise. DMZs in hosted environments. Most of these patterns died off as the control plane moved upwards, closer to the identity or application layer where it belonged. An extra hoop to jump through is always worse for speed, reliability, and UX. It’s only ever a stopgap.

Where That Leaves You

If you’re a security person trying to secure this new wave of stuff, it’s worth thinking about whether the more foundational pieces are worth doing first. Don’t layer on a new proxy if 50% of your staff will just work around it. I’ve even seen places with strong controls in place still have significant issues with AI. In many cases, if AI can’t access something, it’ll work around it. A common pattern is the AI writing a script that pulls session cookies straight out of the browser’s cookie store on disk, which is a considerably worse outcome for security.

There’s also a reasonable case for doing nothing. If you think the platforms will solve this in the next 12-18 months, investing heavily in stopgaps now might not pay off. A lot of that effort gets eaten by the platform shift when it lands. If you do end up buying a tool though, go in knowing it’s a 1-2 year solution rather than a permanent fix. Cut some risk now while you wait for the platforms to catch up.

If you’re a vendor building tools in this space, you’re probably going to run into a few issues:

- Big enterprises are the only ones with the foundational controls in place to actually make this stuff work well.

- SMBs might buy your tool, but it’s going to be a leaky bucket for them. They might catch on over time, or they might never realise. Either way, being dependent on other foundational tools to provide your security isn’t a great place to be as a vendor.

- This isn’t a unique problem, and there’s a lot of interest in securing agents right now. Both the AI model providers and the bigger platform vendors are working on it in different ways. Expect some customers to buy in for a year or two and then churn when the platforms catch up.

This is a market where the control plane and the governance plane are going to converge. Right now vendors are grabbing slices of each, things like proxies, scanners, and agent-specific controls. Over time though it all rolls up into a single platform. If you’re going at this as a long-term venture, solving just one slice probably isn’t going to be enough.

Looking Forward

It’s hard to predict exactly how this plays out, but I think AI follows the same path as VPNs, thin clients, and DMZs. As controls get built into the platforms themselves, the trust chain stays intact end to end and there’s no need to tack anything on top. The control plane will move upwards like it always does, closer to identity controls and further up the stack to the application layer.

For now though, if you want to actually protect this stuff today, the answer is the same as it’s been in every other emerging area: proxies, layered controls, and a fair amount of bootstrapped trust. It’s not ideal, but it’s where the market is.